Distributed processing using parallelize is one of the main features of PySpark. PySpark is used by many organizations, including Walmart, Trivago, Sanofi, and Runtastic, largely because it processes large datasets efficiently. It is also widely used in the data science and machine learning community, since many popular data science libraries, including NumPy and TensorFlow, are written in Python.

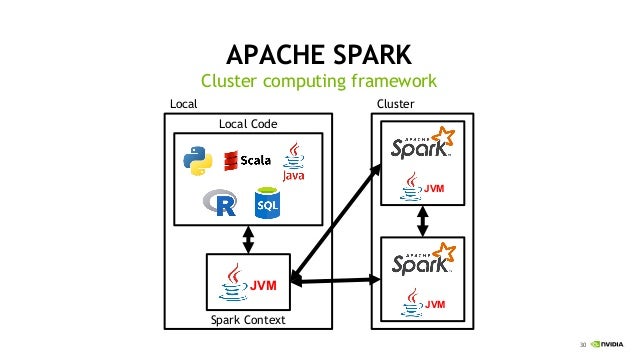

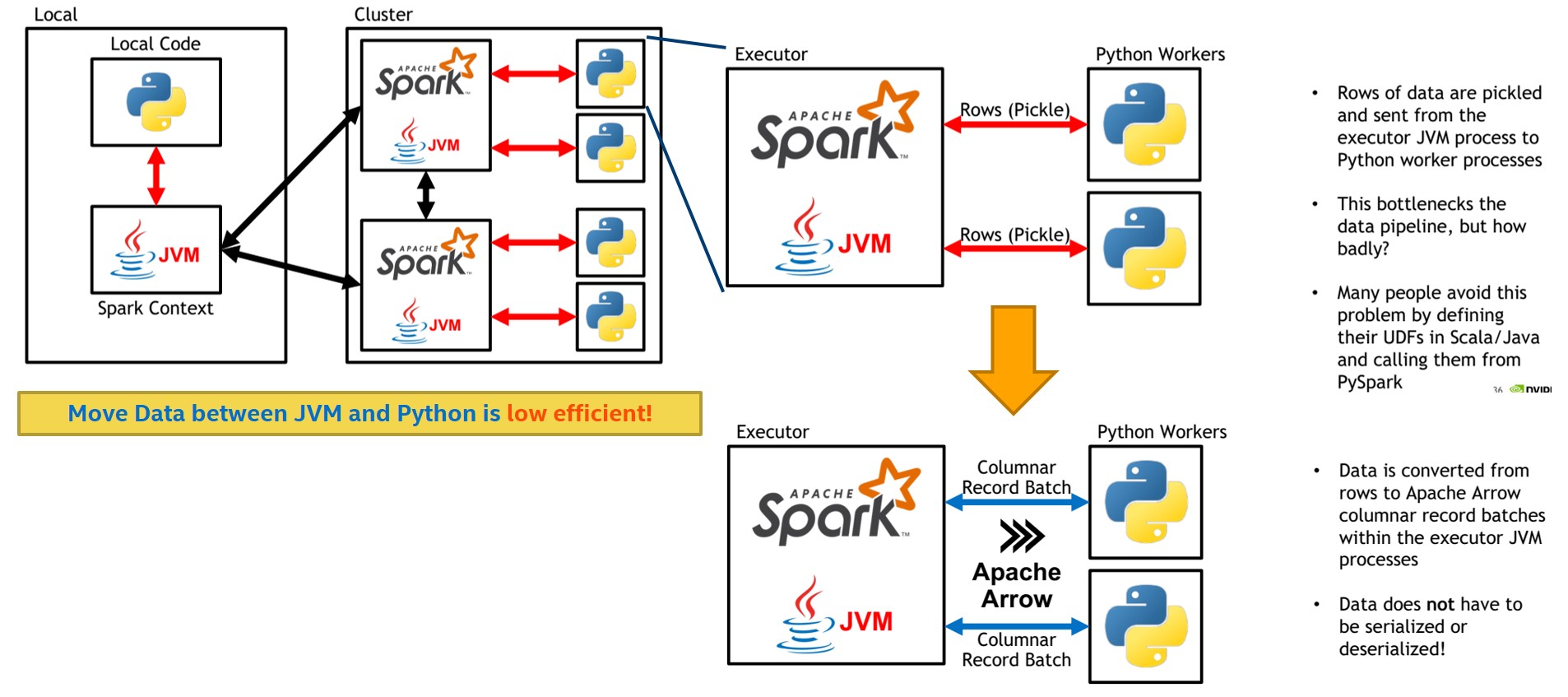

Apache Spark is an analytical processing engine for large-scale distributed data processing and machine learning applications. PySpark is the Python API for Apache Spark: a Spark library that lets Python applications use Spark's capabilities and run in parallel across a distributed cluster (multiple nodes). Before we jump into the PySpark tutorial, let's first understand what PySpark is, how it relates to Python, who uses it, and what its advantages are.
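To make the idea of running Python code in parallel more concrete, here is a minimal sketch that distributes a small list with `parallelize` and sums the squares of its elements. The function names (`square`, `sum_of_squares_local`, `sum_of_squares_spark`), the app name, and the `local[*]` master setting are illustrative assumptions, not part of the original tutorial. On a real cluster the master URL would point at the cluster manager instead.

```python
def square(x):
    """Plain Python function; Spark ships it to the workers."""
    return x * x


def sum_of_squares_local(numbers):
    """Single-process baseline, used here only for comparison."""
    return sum(square(n) for n in numbers)


def sum_of_squares_spark(numbers, num_slices=4):
    """Same computation, distributed across partitions with PySpark.

    PySpark is imported lazily so that the rest of this module can be
    loaded on a machine where PySpark is not installed.
    """
    from pyspark.sql import SparkSession

    # "local[*]" runs Spark on this machine using all available cores
    # (an assumption for the sketch; a cluster would use another master).
    spark = (
        SparkSession.builder
        .master("local[*]")
        .appName("parallelize-sketch")  # hypothetical app name
        .getOrCreate()
    )
    try:
        # Split the data into partitions that can be processed in parallel.
        rdd = spark.sparkContext.parallelize(list(numbers), num_slices)
        # map() runs on the workers; sum() combines the partial results.
        return rdd.map(square).sum()
    finally:
        spark.stop()
```

With PySpark installed, calling `sum_of_squares_spark([1, 2, 3, 4, 5])` should give the same value as `sum_of_squares_local([1, 2, 3, 4, 5])`. The only difference is that the work is split across partitions rather than done in a single loop.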
